(This is a continuation of the series of articles on Independent Data Auditors that emanated from the event on June 6.)

Financial audit professionals have long relied on a system of Peer Review Audits to preserve the integrity, credibility, and quality of the audit profession.

A Peer Review is an independent evaluation of an auditor’s work, conducted by qualified professionals who were not involved in the original audit engagement. The objective is not to substitute the reviewer’s judgment for that of the original auditor, but to assess whether the audit was performed in accordance with accepted standards, regulatory requirements, and established professional practices.

During a peer review, experienced auditors examine the audit methodology, working papers, evidence collection procedures, documentation practices, and reporting conclusions. Such reviews help determine whether the audit process met the expected standards of professional diligence and competence. The process enhances confidence in audit outcomes and promotes continuous improvement within the profession.

In many professions, peer review forms part of a broader quality assurance framework and serves as an important mechanism for maintaining public trust in the audit process.

Peer Review in the FDPPI Framework

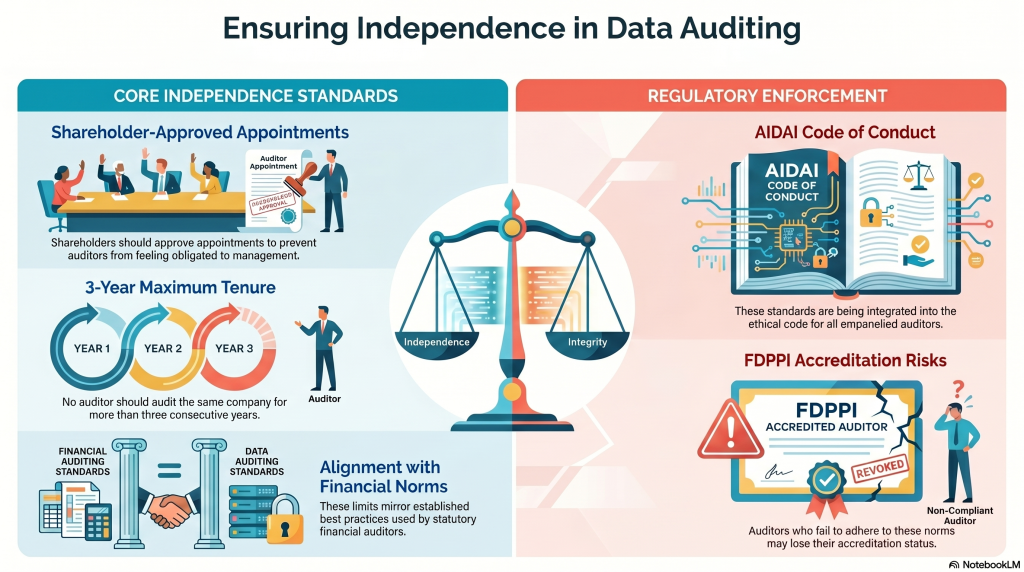

As FDPPI develops its framework for DPDPA compliance audits, elements of the peer review concept are being incorporated as a recommended best practice.

Under the FDPPI framework, audit firms may be recognized as Certified Audit Firms for conducting DPDPA audits. Upon completion of an audit, the auditor is expected to submit a Data Trust Score (DTS) report and the related audit records to FDPPI. These records may be retained by FDPPI for quality assurance purposes and may be referred for peer review when circumstances warrant.

In parallel, the auditee is encouraged to provide feedback on the audit engagement. Comparing the inputs from the auditor and the auditee may occasionally reveal inconsistencies, misunderstandings, or concerns that merit an independent examination. In such situations, FDPPI may recommend a peer review.

It is important to emphasize that FDPPI does not seek to substitute its judgment for that of the auditor or interfere with the auditor’s professional independence. The purpose of peer review is solely to strengthen the credibility and reliability of the audit ecosystem through constructive quality assurance.

Ethical Foundation

The peer review concept is being proposed as part of the evolving Code of Ethics for Independent Data Auditors under the framework of the Association of Independent Data Auditors of India (AIDAI). These principles may be incorporated into the ethical commitments undertaken by auditors as well as into engagement agreements between auditors and their clients.

At present, these remain voluntary professional standards. Neither FDPPI nor AIDAI possesses statutory authority to enforce such ethical obligations. Their effectiveness therefore depends largely on the willingness of auditors to embrace them as part of their professional responsibility.

Beyond Regulation: The Need for Self-Governance

The long-term strength of any profession depends not merely on external regulation but on the internal values of its practitioners. Ethical conduct becomes meaningful only when it is voluntarily adopted and consistently practiced.

FDPPI therefore urges all empanelled auditors to embrace peer review and similar quality assurance measures as part of a commitment to professional excellence. The objective is not compliance with an external mandate, but the cultivation of a culture of integrity, transparency, accountability, and continuous improvement.

Ultimately, the effectiveness of an Independent Data Auditor is determined not only by technical competence but also by the auditor’s commitment to ethical self-governance. In that sense, the profession requires not merely training and certification, but an “inner engineering” that aligns professional conduct with the larger objective of building trust in the digital ecosystem.

A profession earns public trust not through regulation alone, but through the willingness of its members to hold themselves accountable to standards that are often higher than those imposed by law.

Naavi